Originally published in Power Systems Design on April 21, 2026. Read the full story here.

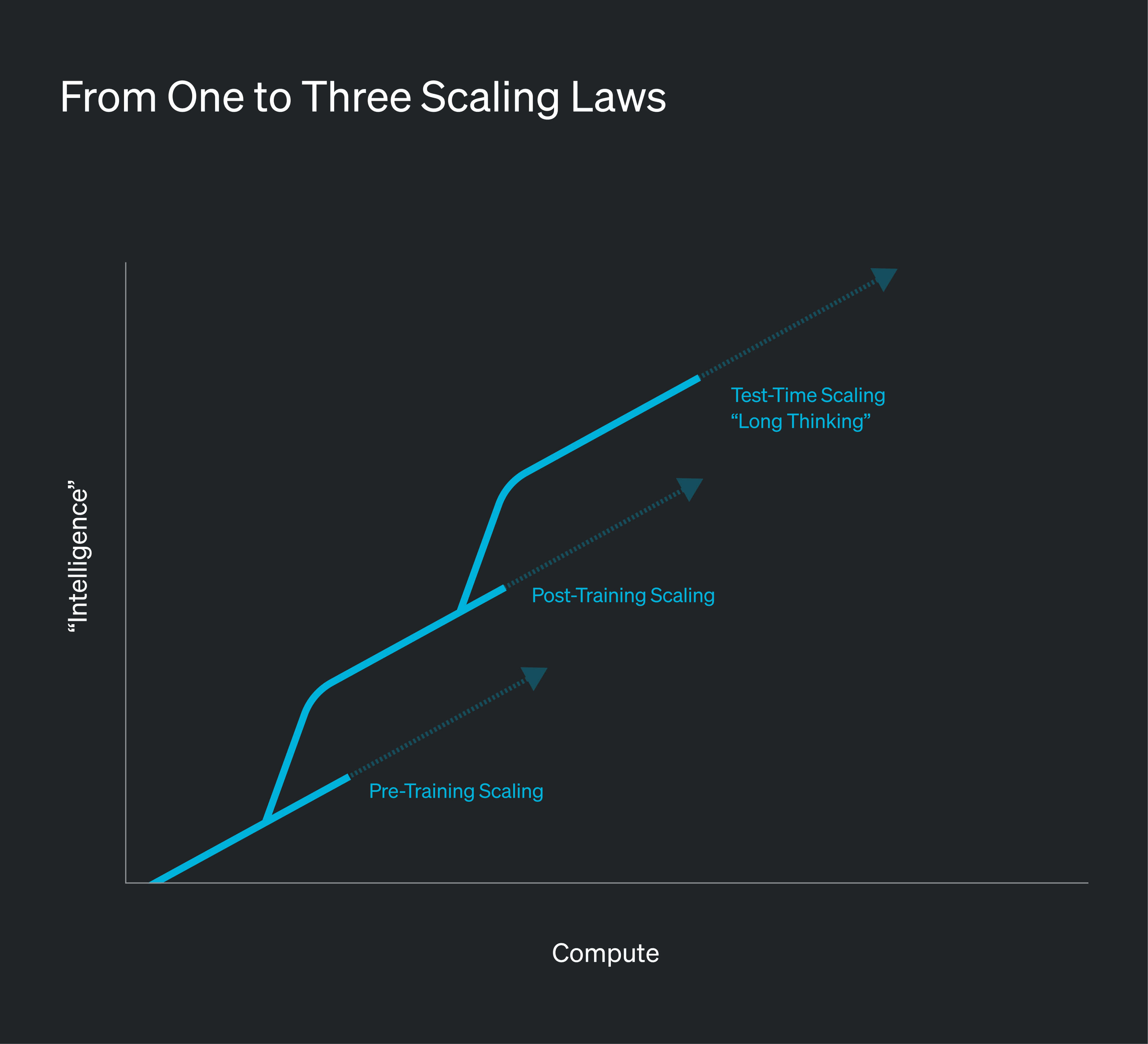

The rapid growth of AI is driven by compute deployment at an unprecedented pace. AI scaling laws show that larger models trained with exponentially more compute consistently achieve better performance. For leading technology companies, the implication is clear: the most effective way to improve AI systems is to dramatically increase the compute devoted to training.

As AI has matured, these scaling dynamics now extend beyond pretraining to post-training and inference workloads, further amplifying demand for accelerated compute. Frontier labs and AI cloud providers are racing to build multi-gigawatt clusters. In the U.S., AI-related power demand is projected to rise from roughly 3 GW in 2023 to over 35 GW by 2026.

However, this unprecedented digital expansion has collided with a constrained U.S. electric grid. Traditional “firm” connections can take five years or more due to transmission upgrades, supply chain bottlenecks, engineering studies, and backlogged interconnection requests. In an industry where model generations become obsolete in under a year and a single gigawatt-scale data center can generate $40–50 billion annually, waiting imposes an unacceptable opportunity cost. To accelerate deployment, operators increasingly adopt a “Bring Your Own Generation” (BYOG) strategy, deploying behind-the-meter power to bypass grid delays.
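The opportunity cost described above is easy to put in rough numbers. The sketch below multiplies the annual value range quoted in the text by the length of the delay. The function name and the flat, undiscounted treatment of annual value are illustrative assumptions, not figures or methods from the source.

```python
def delay_opportunity_cost(annual_value_usd: float, delay_years: float) -> float:
    """Value forgone while a site waits for power.

    Assumption: value accrues at a flat annual rate with no discounting.
    """
    return annual_value_usd * delay_years


# Figures from the text: $40-50 billion per year for a gigawatt-scale site,
# and a five-year wait for a traditional firm grid connection.
low = delay_opportunity_cost(40e9, 5)
high = delay_opportunity_cost(50e9, 5)
print(f"Forgone value over 5 years: ${low / 1e9:.0f}B to ${high / 1e9:.0f}B")
```

Under these simplified assumptions, a five-year wait corresponds to roughly $200–250 billion of forgone value per gigawatt-scale site.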

The Complexities of Behind-the-Meter Power Solutions

Behind-the-meter power bypasses grid bottlenecks but introduces significant engineering and economic challenges. AI demand has exhausted equipment supply through the end of the decade, while permanent solutions such as combined-cycle gas turbine (CCGT) plants require over 24 months for commissioning. Scaling traditional assets for onsite prime power also presents a persistent balancing act for operators.

Industrial Gas Turbines (IGTs)

Industrial gas turbines (IGTs) face significant availability constraints, with lead times now ranging from 18 to 36 months. These delays stem from finite manufacturing capacity and supply constraints for turbine blades and cores. Production of these critical components relies on exotic single-crystal nickel superalloys and specialized high-temperature ceramics that remain in global short supply.

Operational characteristics also limit their suitability for AI workloads. Standard single-shaft turbine designs directly couple the generator and compressor. Sudden increases in computing demand impose braking torque on the generator, briefly reducing compressor airflow at the moment additional power is required. This can trigger compressor stall and significant voltage sag, with recovery times approaching 30 seconds.

IGTs also ramp slowly, often requiring 20 minutes or more from cold start to full output, making them better suited for steady baseload generation than the highly variable loads of AI clusters. Maintaining stable power quality therefore requires supplemental battery storage and natural-gas reciprocating engines to absorb rapid load swings. This hybrid architecture increases cost and operational complexity, while supply constraints and limited flexibility make such deployments difficult to scale. Additionally, their limited ability to serve as a backup resource further raises the risk of stranded assets once grid capacity becomes available.

Reciprocating Internal Combustion Engines (RICE)

Reciprocating generators have long served as data center backup systems, efficiently handling block loads, transients, and partial-load operation. While they avoid the superalloy and ceramic supply constraints of turbines, lead times still run roughly 18–24 months.

At gigawatt scale, emissions pose a major constraint. Even the cleanest natural gas engines produce significant pollutants, complicating air permitting and slowing deployment. Compliance typically requires Best Available Control Technology (BACT) aftertreatment systems, which add cost and operational complexity while still failing to fully overcome site-specific capacity limits.

These challenges are compounded by mechanical demands: large installations require hundreds or thousands of engines, each with thousands of moving parts operating under rapid stop-start cycles. This cyclic loading accelerates component wear, necessitating frequent servicing and oil changes. Together with emissions constraints, these factors create continuous maintenance needs, higher lifecycle costs, and increased operational complexity.

Solid-Oxide Fuel Cells (SOFCs)

Fuel cells offer quiet operation, low local emissions, and relatively fast installation timelines. However, they also face significant economic challenges. Current systems carry capital costs approaching $4,000 per kilowatt, making large-scale deployments extremely expensive. In addition, these systems experience gradual performance degradation over time and require expensive stack replacements every 5–6 years. These lifecycle costs make them difficult to justify for installations requiring hundreds of megawatts of continuous power.
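To see how the quoted capital cost scales, the sketch below multiplies the roughly $4,000 per kilowatt figure from the text by a few installation sizes. The sizes and the function name are illustrative assumptions. Stack replacement costs are left out because the source gives no figure for them.

```python
def sofc_capex_usd(capacity_mw: float, cost_per_kw_usd: float = 4_000.0) -> float:
    """Upfront capital cost for a fuel cell installation of the given size."""
    return capacity_mw * 1_000 * cost_per_kw_usd  # MW -> kW


# Hypothetical installation sizes, chosen only to illustrate scaling.
for mw in (100, 500, 1_000):
    print(f"{mw:>5} MW -> ${sofc_capex_usd(mw) / 1e9:.1f}B upfront")
```

At this unit cost, every 100 MW of capacity adds about $0.4 billion before any stack replacements are counted.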

The Unique Power Quality Challenges of AI Workloads

Beyond sheer scale, AI workloads introduce unprecedented power quality challenges.

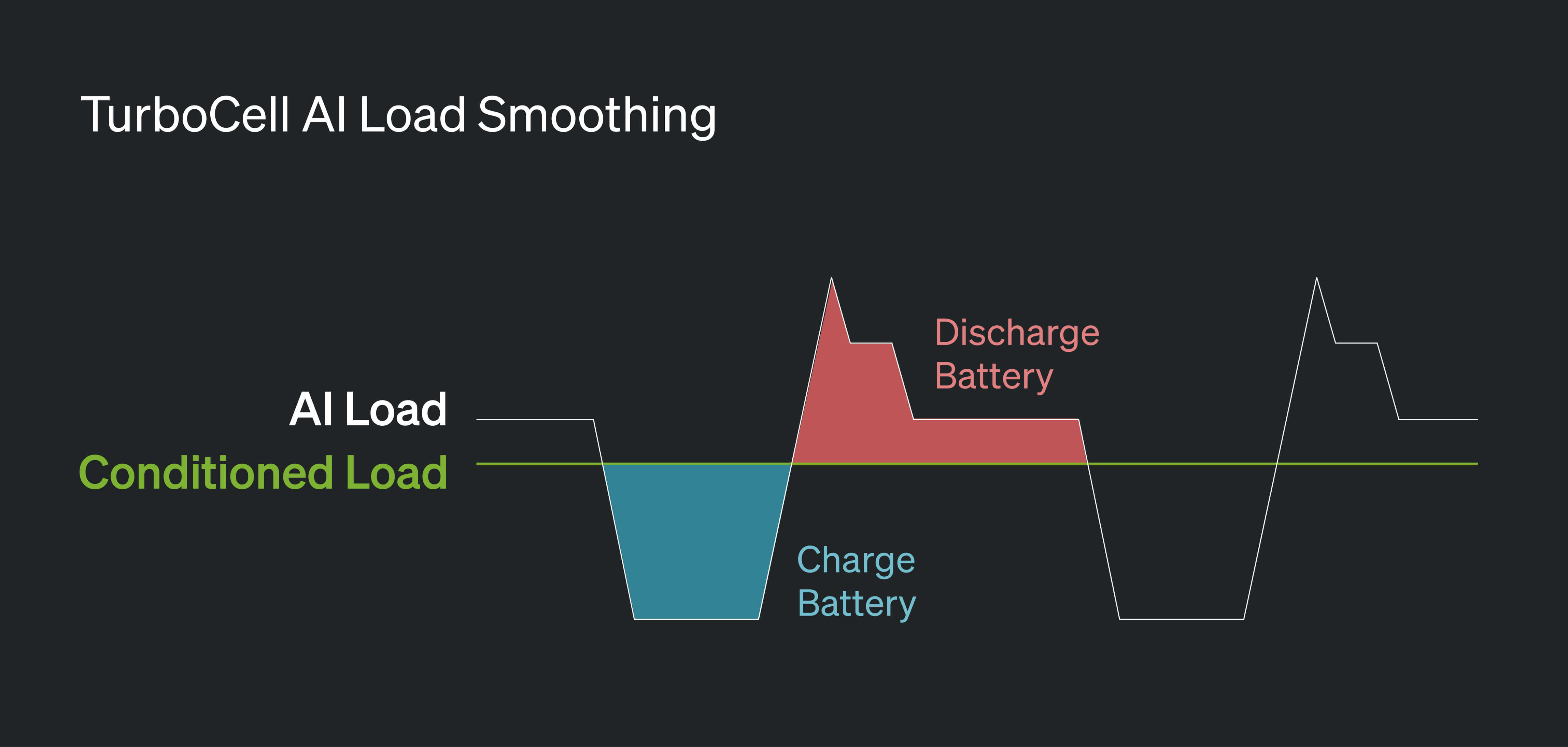

Traditional cloud workloads consisted of many largely uncorrelated CPU-based tasks distributed across millions of virtual machines. This diversity naturally smoothed demand, resulting in relatively stable data center power loads. Large-scale AI training operates very differently. Most models rely on bulk-synchronous parallel (BSP) computing, where tens of thousands of GPUs execute in lockstep, producing a phenomenon known as synchronous volatility.

During compute-intensive matrix operations, power demand can surge to maximum capacity. During communication and synchronization phases, demand can fall by as much as 70% within fractions of a second. These rapid current swings create severe voltage sags and swells that can trigger Undervoltage Lockout (UVLO) protections in servers or nuisance breaker trips, potentially interrupting hours of training progress.

To prevent these failures, operators often rely on software and firmware workarounds that keep GPUs artificially busy during idle phases, consuming energy without producing useful computation. At gigawatt scale, this “artificial loading” can waste hundreds of thousands of MWh annually. Energy storage is also deployed to buffer these fluctuations, but its placement involves trade-offs. Rack-level storage reduces local instability but consumes valuable white space and adds structural weight, while centralized storage higher in the power hierarchy exposes critical infrastructure—such as UPS systems and PDUs—to voltage perturbations and increases the potential failure domain of a single fault.
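The scale of the "artificial loading" waste can be sanity-checked with simple arithmetic. The sketch below assumes a 1 GW site running year-round and treats the wasted share of site energy as a free parameter. That share is hypothetical, since the source gives no figure for it.

```python
HOURS_PER_YEAR = 8_760


def artificial_loading_mwh(site_mw: float, wasted_fraction: float) -> float:
    """Annual energy spent keeping GPUs busy without useful work.

    wasted_fraction is a hypothetical share of total site energy;
    the source does not state one.
    """
    return site_mw * HOURS_PER_YEAR * wasted_fraction


# 1 GW site with assumed waste shares of 2%, 5%, and 10%.
for share in (0.02, 0.05, 0.10):
    mwh = artificial_loading_mwh(1_000, share)
    print(f"{share:.0%} of site energy -> {mwh:,.0f} MWh per year")
```

A 1 GW site running continuously consumes 8.76 million MWh per year, so each percentage point of artificial loading is about 87,600 MWh. A waste share of only a few percent therefore lands in the hundreds of thousands of MWh.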

The TurboCell Solution: Purpose-Built Power for AI

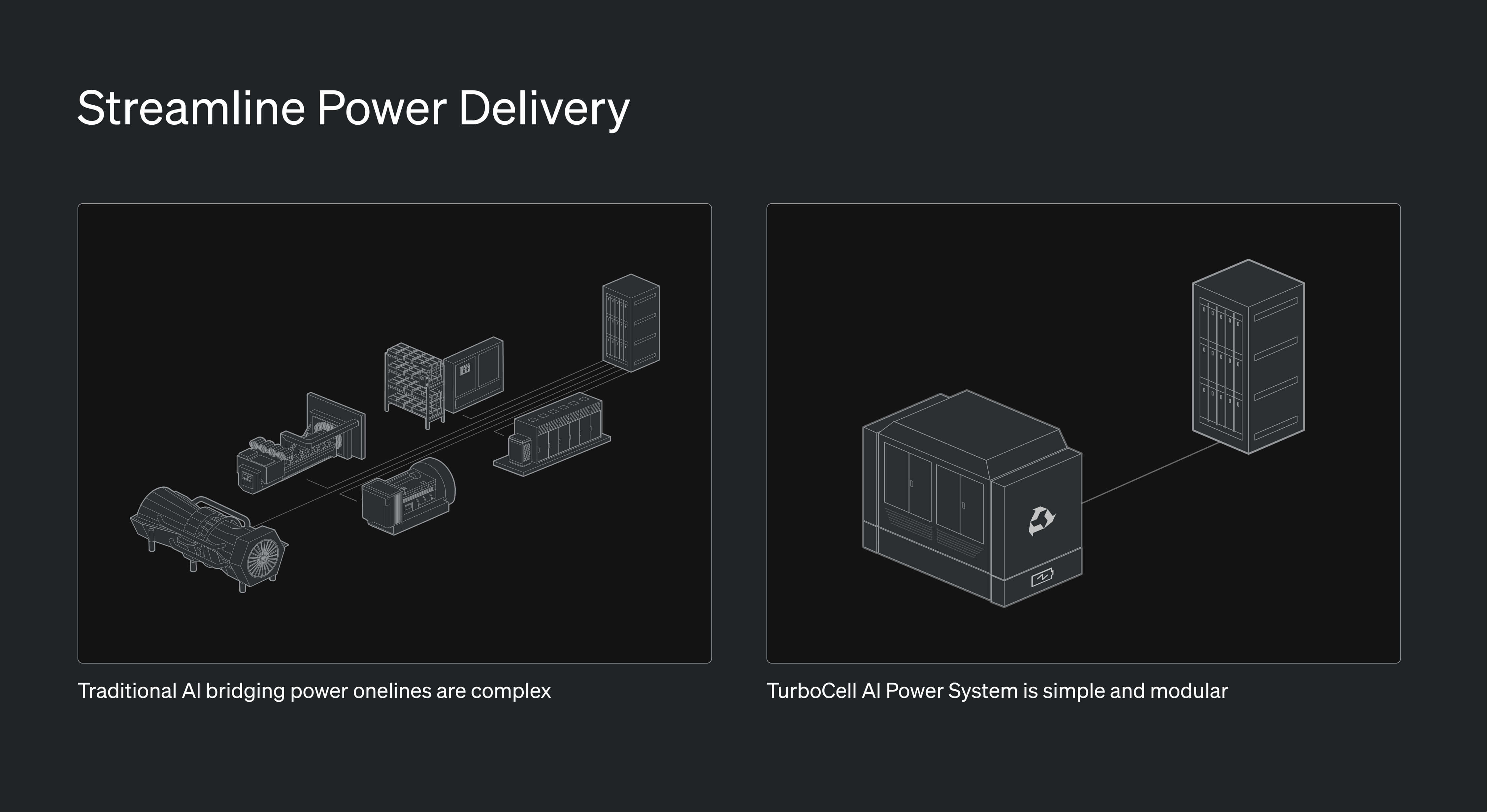

To bridge the gap between legacy power infrastructure and AI workloads, Endeavour is deploying the TurboCell hybrid power system at gigawatt scale in 2027. The platform combines a recuperated Brayton-cycle turbogenerator with a native hybrid battery DC architecture optimized for AI data centers.

TurboCell is a multi-fuel system—capable of running on natural gas, hydrogen, or diesel—and can provide prime, bridging, or backup power. By spanning the full power delivery continuum, a single system enables rapid capacity deployment while maximizing long-term value across the AI infrastructure lifecycle.

Automotive-Scale Manufacturing and Supply Chain Resilience

To avoid supply constraints associated with heavy-duty turbines, Endeavour partnered with BorgWarner to leverage its expertise in turbocharger manufacturing and its portfolio of mass-produced industrial powertrain components. Fully integrated and assembled in North America as standardized modular units, TurboCell provides scalable onsite generation without the complexity of site-specific construction. Using the automotive industry’s global supply chains, mature component ecosystems, and high-throughput manufacturing, TurboCell shortens deployment timelines and enables consistent execution at multi-gigawatt scale, shifting power generation from bespoke project engineering to repeatable industrial production.

Decoupled Power Spool for Instant Transient Response

TurboCell employs a patented split-shaft (triple-spool) architecture that separates the engine into two mechanically independent rotating assemblies: the generator spool and the power spools. Decoupling the two fundamentally changes the transient behavior that limits traditional single-shaft turbines. During a large load step, only the generator spool slows, while the power spools, mechanically isolated from the electrical load, continue operating at optimal speed and airflow. This creates an inverse relationship between system energy and generator load, allowing the system to respond rapidly to large block loads without risking compressor stall.

Integrated Battery for AI Load Smoothing and Power Quality

TurboCell incorporates an integrated battery system to manage high-frequency load fluctuations. The battery absorbs millisecond-scale transients while the split-shaft turbine supplies sustained megawatt-scale power, enabling the system to handle both rapid load spikes and continuous generation.
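The division of labor between battery and turbine can be illustrated with a generic low-pass split: a slow-moving setpoint follows the average load, and the battery covers the remainder. This is a minimal sketch of that general idea, not Endeavour's control scheme. The smoothing factor `alpha`, the synthetic load trace, and the function name are all assumptions made for illustration.

```python
def split_load(load_mw, alpha=0.05):
    """Split a load trace into a slow turbine setpoint and a fast battery residual.

    alpha is a hypothetical smoothing factor (0 < alpha <= 1); smaller values
    make the turbine setpoint change more slowly.
    """
    turbine, battery = [], []
    setpoint = load_mw[0]
    for p in load_mw:
        setpoint += alpha * (p - setpoint)  # exponential moving average
        turbine.append(setpoint)
        battery.append(p - setpoint)        # > 0 discharges, < 0 charges
    return turbine, battery


# Synthetic lockstep trace: 100 MW compute phases alternating with
# 30 MW synchronization phases (the 70% drop described in the article).
load = ([100.0] * 10 + [30.0] * 10) * 20
turbine, battery = split_load(load)

print(f"load swing:    {max(load) - min(load):.1f} MW")
print(f"turbine swing: {max(turbine) - min(turbine):.1f} MW")
print(f"battery peak:  {max(abs(b) for b in battery):.1f} MW")
```

In this toy model the turbine setpoint swings less than the raw load, and the battery alternately charges and discharges to cover the difference. The two outputs always sum to the load at every step.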

This architecture also enables close coupling with data-center racks. By allowing the low-latency placement of bulk capacitance external to the rack, TurboCell frees valuable compute space and eliminates the need for energy-intensive GPU burn mechanisms. Additionally, rectifying low-voltage AC directly to a common DC bus removes the neutral conductor, preventing triplen harmonic accumulation, while the inverter isolates electrical distortion from upstream infrastructure.
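For readers unfamiliar with triplen harmonics, the sketch below shows why they accumulate in a neutral conductor. In a balanced three-phase, four-wire system the phase currents are offset by 120 degrees, so the fundamental cancels in the neutral. Harmonics whose order is a multiple of three are shifted by whole cycles instead, so they add. The function name and unit amplitudes are illustrative assumptions.

```python
import math


def neutral_current(harmonic: int, amplitude: float, theta: float) -> float:
    """Sum of the three phase currents for one harmonic at electrical angle theta."""
    shift = 2 * math.pi / 3  # 120 degrees between phases
    return sum(
        amplitude * math.sin(harmonic * (theta - k * shift)) for k in range(3)
    )


# Evaluate at theta = 30 degrees, where the 3rd harmonic is at its peak.
theta = math.pi / 6
for h in (1, 3, 5, 9):
    print(f"harmonic {h}: neutral current = {neutral_current(h, 1.0, theta):+.3f}")
```

The 1st and 5th harmonics sum to zero in the neutral, while the 3rd and 9th reach three times the per-phase amplitude. A DC bus has no neutral conductor in which these components can accumulate.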

Emissions and Reliability

TurboCell eliminates the high-wear friction interfaces found in reciprocating engines, reducing maintenance frequency and downtime. Longer service intervals—such as oil changes every 16,000 operating hours—combined with a modular design and fewer moving parts lower labor requirements and increase overall system availability.

An external combustor isolates the turbo from thermal stress and enables very low emissions. At ~0.03 g/kWh NOₓ, TurboCell produces roughly 86% less NOₓ than typical IGTs without aftertreatment and outperforms BACT systems by ~20%, simplifying permitting and allowing operators to maximize onsite generation capacity.
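The percentages above imply comparison baselines that the text does not state directly. The sketch below backs them out, reading "86% less" and "outperforms by ~20%" as straightforward reductions in emission rate. That reading is an assumption. The annual total further assumes a hypothetical 1 GW site running continuously.

```python
def implied_baseline(rate_g_per_kwh: float, reduction: float) -> float:
    """Baseline emission rate implied by a stated fractional reduction."""
    return rate_g_per_kwh / (1.0 - reduction)


def annual_nox_tonnes(rate_g_per_kwh: float, site_mw: float, hours: int = 8_760) -> float:
    """Annual NOx in metric tonnes for a site running at constant output."""
    kwh = site_mw * 1_000 * hours
    return rate_g_per_kwh * kwh / 1e6  # grams -> metric tonnes


turbocell = 0.03  # g/kWh, from the text
print(f"Implied IGT baseline:  {implied_baseline(turbocell, 0.86):.3f} g/kWh")
print(f"Implied BACT baseline: {implied_baseline(turbocell, 0.20):.4f} g/kWh")
print(f"Annual NOx at 1 GW:    {annual_nox_tonnes(turbocell, 1_000):.1f} tonnes")
```

Under these assumptions the implied baselines are about 0.21 g/kWh for IGTs without aftertreatment and about 0.0375 g/kWh for BACT-equipped systems.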

Future-Proof Power for the AI Era

AI is driving an unprecedented surge in electricity demand that traditional grids cannot keep pace with—and conventional onsite generation assets were never designed to support. TurboCell addresses this gap with an AI-optimized power architecture built to accelerate time-to-power while simplifying operations at scale. Designed specifically for next-generation data centers, the system delivers the flexibility, reliability, and long-term economics required to power the future of AI infrastructure.
