A simple empirical rule now governs AI development: larger models trained on more data with more compute perform predictably better. Researchers refer to these relationships as the AI scaling laws, and they have reshaped how companies build more capable systems.1

The implication is straightforward: the most reliable way to improve AI performance is to dramatically increase the amount of compute used to train models. As a result, frontier AI labs and cloud providers are deploying ever-larger, multi-gigawatt computing clusters and committing hundreds of billions of dollars to the infrastructure required for next-generation AI.2

The United States has become the global hub for this expansion. Its technological and AI hardware leadership, deep capital markets, geographic scale, and supportive regulatory framework make it uniquely suited to host the massive data center campuses required for frontier AI development.

But this unprecedented digital expansion has collided with a stubborn physical constraint: the U.S. electric power grid.

Slow infrastructure expansion and supply chain bottlenecks mean that data centers seeking traditional “firm” grid connections often face interconnection delays of 5+ years. In an industry where model generations become obsolete in less than a year, waiting half a decade for power is effectively a nonstarter. This challenge has spurred growth in behind-the-meter generation, and it is also driving the adoption of a new operational model that could benefit the broader grid.3

The Key Concept: Curtailment-Enabled Headroom

In simple terms, the idea is to temporarily reduce the electricity a data center draws from the grid during rare periods of grid stress. This can involve curtailing, pausing, or redistributing workloads, but it can also be done without interrupting computing operations at all.

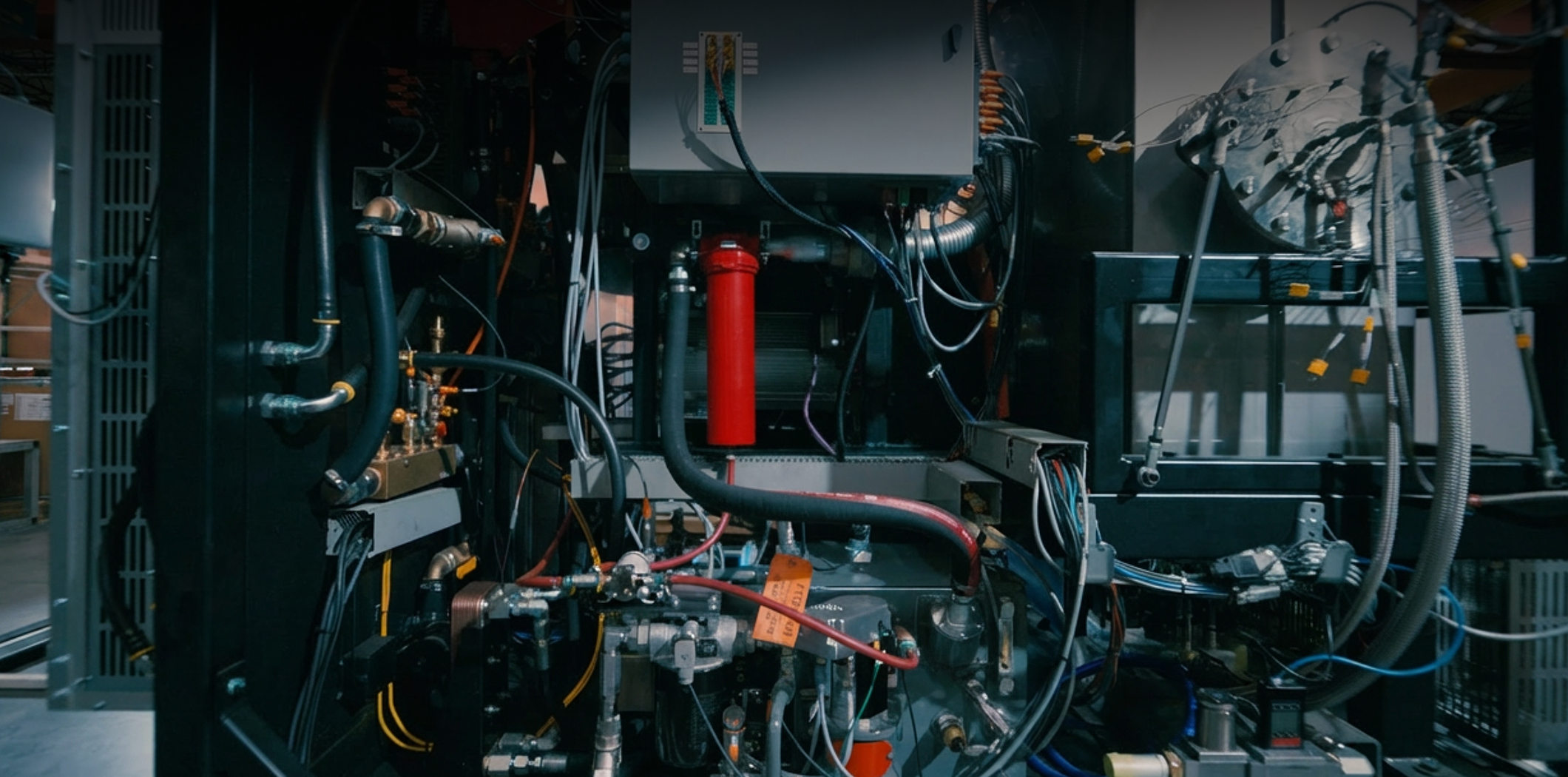

Facilities achieve this by seamlessly switching from the electric grid to behind-the-meter resources, such as TurboCell, that can reliably power the entire campus. When grid conditions tighten, the data center can temporarily “island” itself. From the grid operator’s perspective, the facility has disappeared from the grid, relieving pressure on the system by reducing demand. Inside the data center, however, operations continue uninterrupted.

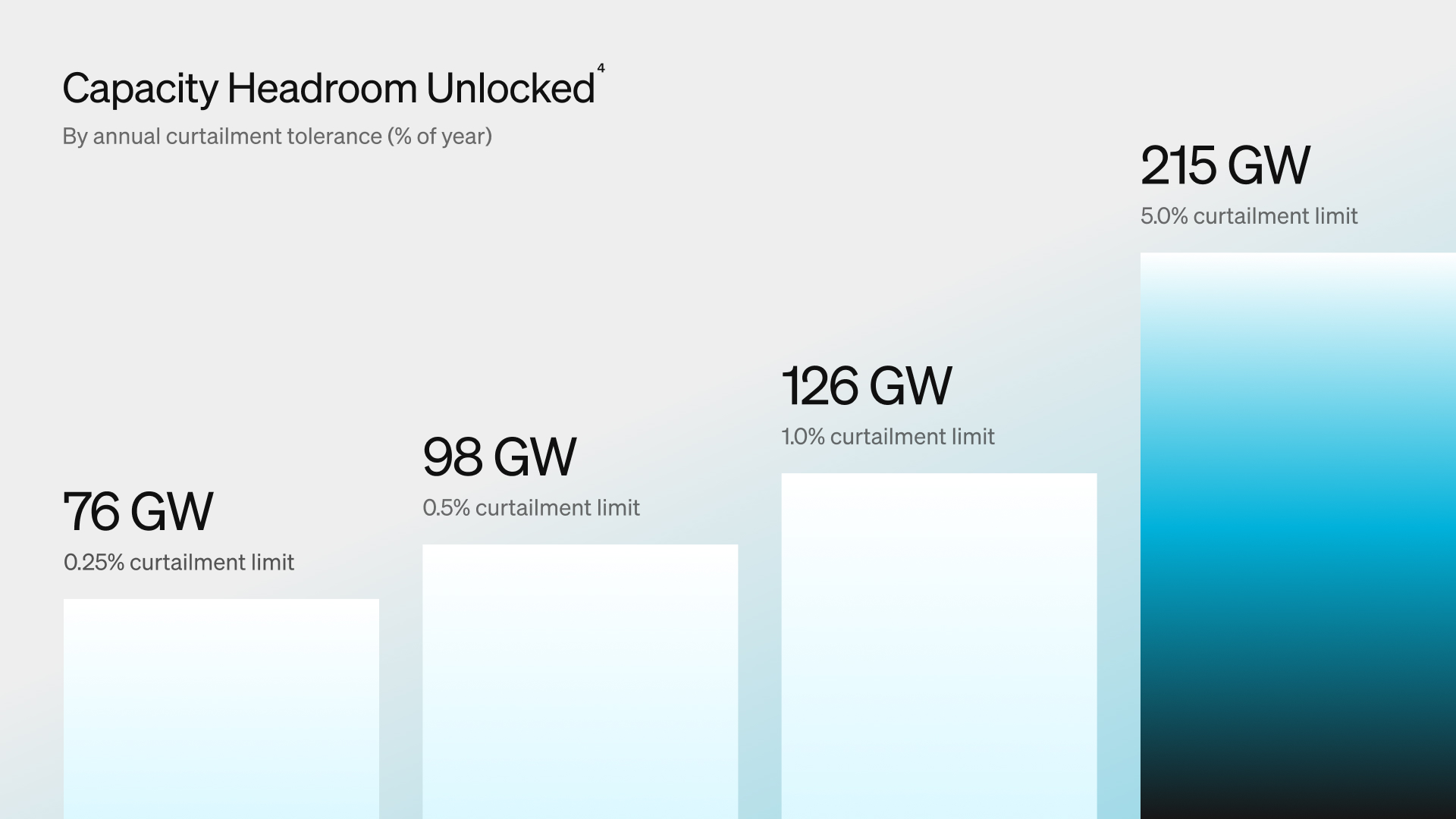
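As a rough illustration of the islanding behavior described above, the following Python sketch models a campus that drops its grid draw to zero during a stress event while its computing load continues to be served on-site. The class, its fields, and the boolean stress signal are hypothetical stand-ins, not a TurboCell or grid-operator API, and the sketch deliberately ignores the real transfer mechanics (synchronization, ramping, protection).

```python
from dataclasses import dataclass


@dataclass
class FlexibleCampus:
    """Hypothetical model of a campus that can island onto behind-the-meter power.
    Names are illustrative only, not a real product or utility API."""
    facility_load_mw: float     # what the computing hardware needs; unchanged by islanding
    onsite_capacity_mw: float   # behind-the-meter generation available
    islanded: bool = False

    def on_grid_signal(self, grid_stressed: bool) -> None:
        """Island during a stress event if on-site capacity covers the whole load;
        reconnect once the event clears."""
        if grid_stressed and self.onsite_capacity_mw >= self.facility_load_mw:
            self.islanded = True
        elif not grid_stressed:
            self.islanded = False

    def grid_draw_mw(self) -> float:
        """Load the grid operator sees at the point of interconnection."""
        return 0.0 if self.islanded else self.facility_load_mw

    def onsite_output_mw(self) -> float:
        """Load carried by on-site generation."""
        return self.facility_load_mw if self.islanded else 0.0


campus = FlexibleCampus(facility_load_mw=500, onsite_capacity_mw=550)
for hour, stressed in enumerate([False, True, True, False]):
    campus.on_grid_signal(stressed)
    served = campus.grid_draw_mw() + campus.onsite_output_mw()
    print(hour, stressed, campus.grid_draw_mw(), campus.onsite_output_mw(), served)
```

The invariant worth noticing is that grid draw plus on-site output always equals the facility load: islanding changes where the power comes from, not how much the hardware uses.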

This approach unlocks what researchers call Curtailment-Enabled Headroom:4 the additional load the existing grid can accommodate if large consumers agree to reduce their demand during peak stress events. Even a small amount of curtailment can unlock substantial capacity.

For example, if new data centers relied on on-site generation for the equivalent of just 87 hours per year (1% of the year’s 8,760 hours), the 22 largest U.S. balancing authorities could accommodate roughly 126 GW of new load without building any additional generation capacity.5
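Curtailment-enabled headroom can be illustrated with a simplified calculation: take an hourly system load profile, hold system capacity fixed at today’s peak, and search for the largest constant new load whose required curtailment stays within a given share of its annual energy. The Python sketch below does this by bisection. It is a toy version of the idea under stated assumptions, not the Duke study’s actual model, and the load profile is synthetic (hypothetical daily and seasonal cycles), so its outputs say nothing about real balancing authorities.

```python
import math

HOURS_PER_YEAR = 8760


def synthetic_load_gw(peak_gw: float = 100.0) -> list[float]:
    """Hypothetical hourly system load with daily and seasonal cycles (NOT real data)."""
    raw = []
    for h in range(HOURS_PER_YEAR):
        daily = math.sin(2 * math.pi * ((h % 24) - 9) / 24)             # peaks at 15:00
        seasonal = math.cos(2 * math.pi * (h / HOURS_PER_YEAR - 0.55))  # peaks in summer
        raw.append(0.6 + 0.15 * daily + 0.15 * seasonal)
    scale = peak_gw / max(raw)
    return [x * scale for x in raw]


def curtailed_energy_gwh(load_gw, capacity_gw, new_load_gw):
    """Energy the new load must shed (or self-supply) so grid load never exceeds capacity."""
    return sum(max(0.0, x + new_load_gw - capacity_gw) for x in load_gw)


def headroom_gw(load_gw, capacity_gw, curtailment_rate, tol=1e-6):
    """Largest constant new load whose curtailed energy stays within
    curtailment_rate of its annual energy use, found by bisection."""
    lo, hi = 0.0, capacity_gw
    while hi - lo > tol:
        mid = (lo + hi) / 2
        budget = curtailment_rate * mid * len(load_gw)  # allowed curtailed energy
        if curtailed_energy_gwh(load_gw, capacity_gw, mid) <= budget:
            lo = mid
        else:
            hi = mid
    return lo


def equivalent_hours(curtailment_rate):
    """Curtailment rate expressed as full-load hours per year."""
    return curtailment_rate * HOURS_PER_YEAR


load = synthetic_load_gw()
capacity = max(load)  # assumption: the existing system is sized exactly to today's peak
for rate in (0.0025, 0.005, 0.01):
    print(f"{rate:.2%}: ~{equivalent_hours(rate):.1f} full-load hours/yr, "
          f"headroom {headroom_gw(load, capacity, rate):.1f} GW")
```

Bisection works here because the curtailed energy grows convexly with the new load while the allowed budget grows linearly, so the feasible loads form a single interval starting at zero. The `equivalent_hours` helper reproduces the arithmetic behind the 87-hour figure: 1% of 8,760 hours is 87.6 hours.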

The Broader Benefit: Higher Grid Utilization and Lower Costs

Flexible data centers benefit not only AI infrastructure providers but also everyday electricity customers.

The U.S. power system operates with a surprisingly low load factor (average demand divided by peak demand) of about 53%. Infrastructure is built to meet extreme peak demand, leaving much of it underutilized for the rest of the year. When a large data center demands a 24/7, inflexible, or “firm” power connection, it raises peak load, potentially triggering expensive upgrades to power plants, transmission, and distribution infrastructure. These costs are often socialized across all customers.6

By operating on grid power during most hours and switching to on-site generation only during peaks, flexible data centers fill the grid’s idle valleys without increasing the peak. This spreads fixed infrastructure costs across more kilowatt-hours of sales, improving utilization and potentially lowering electricity prices for everyone.
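The load-factor argument can be checked with a toy calculation. The sketch below uses a hypothetical one-day hourly profile (not real data, and with a higher load factor than the 53% national average), adds a 20 MW flexible load that draws from the grid only where it fits under the existing peak, and compares load factor and fixed cost per MWh before and after. The function names and numbers are illustrative assumptions.

```python
def load_factor(load_mw):
    """Average load divided by peak load over the period."""
    return (sum(load_mw) / len(load_mw)) / max(load_mw)


def add_flexible_load(load_mw, new_mw, peak_cap_mw):
    """Serve a constant new load from the grid in every hour where it fits under
    peak_cap_mw; whatever does not fit is assumed to be supplied on-site."""
    return [x + min(new_mw, max(0.0, peak_cap_mw - x)) for x in load_mw]


def fixed_cost_per_mwh(load_mw, fixed_cost):
    """Spread a fixed network cost over all energy delivered in the period."""
    return fixed_cost / sum(load_mw)


# Hypothetical one-day hourly profile (MW), peaking at 100 MW in the late afternoon.
base = [60, 55, 52, 50, 50, 55, 65, 75, 80, 82, 85, 88,
        90, 92, 95, 98, 100, 97, 92, 85, 78, 72, 66, 62]
flex = add_flexible_load(base, new_mw=20, peak_cap_mw=max(base))

print(max(base), max(flex))                                       # 100 100
print(round(load_factor(base), 2), round(load_factor(flex), 2))   # 0.76 0.91
print(round(fixed_cost_per_mwh(flex, 100_000) / fixed_cost_per_mwh(base, 100_000), 3))  # 0.837
```

The peak, and therefore the infrastructure sized to it, stays the same while the energy delivered rises, so each MWh carries a smaller share of the fixed cost (about 16% less in this toy case).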

Why Hasn’t Demand Flex Been Used at Scale Before?

Despite the clear benefits, flexible operations have historically been rare. The barriers are primarily legacy technology and the perception of operational risk.

Data centers typically operate under strict service-level agreements (SLAs) that guarantee uptime and performance, and deviating from grid power was historically perceived as putting those guarantees at risk. Moreover, most on-site generation consisted of Tier II diesel backup generators, designed as insurance rather than as an active resource. Strict air-quality regulations largely restricted these generators to emergency backup during grid outages, leaving gigawatts of installed capacity idle while the grid bore the full burden of peak demand.7

What’s Changed: AI Workloads and Modern Power Technologies

Two major shifts are reshaping the equation: the rise of GPU-based AI workloads and the evolution of on-site power technologies.

Traditional data centers primarily handle real-time, CPU-driven tasks such as web search, financial transactions, and streaming, which demand extremely high facility uptime. The AI boom, however, is powered largely by GPU-based training workloads, which behave very differently. In traditional web services, a single node failure can be absorbed through application-level redundancy. AI training, by contrast, is a tightly coupled workload: all GPUs must synchronize at every training step, so a single component failure, such as a memory error or a network “flap,” can stall the entire cluster. At the scale of tens of thousands of GPUs,8 such failures are frequent enough that an uninterrupted run is statistically implausible, and training software must already tolerate interruptions, for example by checkpointing and restarting. Traditional facility-level high-availability guarantees therefore add little for these workloads.
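The statistical point can be made concrete with a back-of-the-envelope calculation. Assuming independent components with constant (exponential) failure rates, a job that stalls when any one of N components fails sees failures N times as often as a single component does. The per-GPU MTBF and cluster size below are hypothetical placeholders, not measured values.

```python
import math


def cluster_mtbf_hours(component_mtbf_hours: float, n_components: int) -> float:
    """Mean time between job-stopping failures when ANY of n independent components
    can stall the job, assuming constant failure rates."""
    return component_mtbf_hours / n_components


def p_uninterrupted(run_hours: float, component_mtbf_hours: float, n_components: int) -> float:
    """Probability that no component fails during a run of run_hours."""
    return math.exp(-run_hours * n_components / component_mtbf_hours)


def facility_downtime_minutes_per_year(availability: float) -> float:
    """Expected facility downtime implied by an availability target."""
    return (1.0 - availability) * 365 * 24 * 60


# Hypothetical inputs for illustration, not measured fleet data:
GPU_MTBF_HOURS = 100_000  # assumed per-GPU mean time between failures
N_GPUS = 20_000           # "tens of thousands" of GPUs

print(f"{cluster_mtbf_hours(GPU_MTBF_HOURS, N_GPUS):.1f} h between job interruptions")  # 5.0
print(f"{p_uninterrupted(24, GPU_MTBF_HOURS, N_GPUS):.4f} chance of a failure-free day")  # 0.0082
print(f"{facility_downtime_minutes_per_year(0.99999):.1f} min/yr facility downtime at 99.999%")  # 5.3
```

Under these assumptions the job is interrupted roughly every five hours, while a 99.999%-available facility is down only about five minutes per year, so facility outages are a negligible share of the interruptions training software must already handle.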

At the same time, the widespread adoption of behind-the-meter generation at AI campuses has made infrastructure providers markedly more comfortable operating without a grid connection. Combined with the more relaxed SLAs typical of AI campus environments, this makes operators far more willing to temporarily curtail grid demand.

Finally, modern systems like TurboCell produce far lower emissions than diesel generators, allowing them to serve as prime, flex-load, or backup power at AI campus scale. With advanced microgrid controls that make grid-interactive operation plug-and-play, TurboCell can take over an entire facility’s load in minutes, without complex cutovers or workload disruption. With fast-start block loading, a built-in UPS, and flexible fuel options, it provides unprecedented operational flexibility for flex-load data centers of any scale.

What Still Needs to Happen

Although the technology is ready, scaling flexible AI data centers requires coordination between AI cloud providers, utilities, and regulators.

1. Implement New Interconnection Rules

Grid operators and AI cloud providers need closer alignment on an interconnection process that addresses the needs and concerns of all stakeholders; the current framework has not enabled flexible interconnection at meaningful scale. AI cloud providers seek interconnections that can be completed in months rather than years, while grid operators need more effective mechanisms to prioritize legitimate requests and maintain system stability as gigawatts of new load are added. Load that is firmly committed to its delivery timeline and has enforceable curtailment capability should be prioritized for expedited interconnection.
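One way to read the proposed prioritization rule is as a two-tier queue: requests with both a firm delivery commitment and enforceable curtailment go first, and first-come, first-served ordering applies within each tier. The Python sketch below encodes that reading; the request fields and example projects are hypothetical and do not reflect any grid operator’s actual interconnection process.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class InterconnectionRequest:
    """Hypothetical request record; field names are illustrative only."""
    name: str
    load_mw: float
    queue_date: date
    firm_delivery_commitment: bool  # committed to its delivery timeline
    enforceable_curtailment: bool   # can verifiably drop grid draw when called


def expedited(req: InterconnectionRequest) -> bool:
    """Eligible for the fast track only if BOTH conditions hold."""
    return req.firm_delivery_commitment and req.enforceable_curtailment


def review_order(requests: list[InterconnectionRequest]) -> list[InterconnectionRequest]:
    """Expedited requests first; within each tier, earliest queue date first."""
    return sorted(requests, key=lambda r: (not expedited(r), r.queue_date))


requests = [
    InterconnectionRequest("A", 300, date(2024, 1, 10), True, False),
    InterconnectionRequest("B", 500, date(2024, 6, 1), True, True),
    InterconnectionRequest("C", 200, date(2023, 11, 5), False, False),
    InterconnectionRequest("D", 800, date(2025, 2, 20), True, True),
]
print([r.name for r in review_order(requests)])  # ['B', 'D', 'C', 'A']
```

Request A is firmly committed but cannot be curtailed on demand, so under this reading it falls back into the standard tier behind the older request C.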

2. Expand Integrated Resource Planning

Current Integrated Resource Planning does not account for the volume and concentration of curtailable load that large AI campuses introduce to the grid. While the growth of behind-the-meter generation presents a significant opportunity for a more balanced and dynamic power system, sudden load swings at this unprecedented scale could cause voltage and transmission instability, with potentially severe consequences. To address this challenge, AI cloud providers and grid planners must move beyond siloed operations: deep, co-simulated modeling is essential to ensure that flexible operations enhance rather than threaten grid reliability. Additionally, new industry best practices, an established chain of command, and recognized software control platforms are needed to coordinate grid-interactive responses during peak events.
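To illustrate why coordination matters, the sketch below models campuses shifting to on-site power under an assumed per-minute ramp limit, and shows how staggering two campuses keeps the combined change in grid load no steeper than a single campus’s ramp. The ramp limit, campus sizes, and function names are hypothetical assumptions for illustration, not parameters from any grid code or control platform.

```python
def transfer_schedule(grid_draw_mw: float, max_ramp_mw_per_min: float) -> list[float]:
    """Minute-by-minute grid draw while shifting a campus to on-site power,
    never dropping faster than max_ramp_mw_per_min."""
    if max_ramp_mw_per_min <= 0:
        raise ValueError("ramp limit must be positive")
    schedule = [grid_draw_mw]
    while schedule[-1] > 0:
        schedule.append(max(0.0, schedule[-1] - max_ramp_mw_per_min))
    return schedule


def aggregate_draw(schedules_with_offsets):
    """Sum per-minute grid draw of several campuses; each holds its initial draw
    until its start offset (minutes), then follows its own schedule."""
    horizon = max(offset + len(s) for s, offset in schedules_with_offsets)
    total = [0.0] * horizon
    for s, offset in schedules_with_offsets:
        for t in range(horizon):
            if t < offset:
                total[t] += s[0]
            elif t - offset < len(s):
                total[t] += s[t - offset]
            # after its schedule ends, the campus is islanded and draws 0 MW
    return total


s = transfer_schedule(300, 100)  # hypothetical 300 MW campus, 100 MW/min ramp limit
print(aggregate_draw([(s, 0), (s, 0)]))           # [600.0, 400.0, 200.0, 0.0]
print(aggregate_draw([(s, 0), (s, len(s) - 1)]))  # [600.0, 500.0, 400.0, 300.0, 200.0, 100.0, 0.0]
```

Starting both transfers at once doubles the aggregate ramp to 200 MW per minute; offsetting the second campus keeps it at 100 MW per minute at the cost of a longer overall transfer.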

A New Role for Data Centers on the Grid

If utilities, AI cloud providers, and regulators keep pace with the technology, flexible AI data centers could reshape the U.S. power grid—making it more resilient, responsive, and efficient. By blending grid supply with behind-the-meter generation, these facilities can work around interconnection bottlenecks and reduce peak demand, improving overall system utilization without requiring multi-billion-dollar infrastructure buildouts. AI data centers should no longer be treated as a grid liability—they should be recognized and enabled as one of its most powerful, fast-acting sources of flexibility.

1 Kaplan, J., McCandlish, S., Henighan, T., et al. (2020). “Scaling Laws for Neural Language Models.” arXiv:2001.08361. https://arxiv.org/abs/2001.08361. See also NVIDIA, “How Scaling Laws Drive Smarter, More Powerful AI,” https://blogs.nvidia.com/blog/ai-scaling-laws/.

2 Goldman Sachs, “Why AI Companies May Invest More than $500 Billion in 2026” (2025), https://www.goldmansachs.com/insights/articles/why-ai-companies-may-invest-more-than-500-billion-in-2026; OpenAI, “OpenAI, Oracle, and SoftBank expand Stargate with five new AI data center sites” (2025), https://openai.com/index/five-new-stargate-sites/; Data Center Frontier, “Scaling Stargate: OpenAI’s Five New U.S. Data Centers Push Toward 10 GW AI Infrastructure,” https://www.datacenterfrontier.com/machine-learning/article/55319132/scaling-stargate-openais-five-new-us-data-centers-push-toward-10-gw-ai-infrastructure.

3 Lawrence Berkeley National Laboratory, “Queued Up: Characteristics of Power Plants Seeking Transmission Interconnection” (2025), https://emp.lbl.gov/queues. See also Camus Energy, “Why Does It Take So Long to Connect a Data Center to the Grid?” https://www.camus.energy/blog/why-does-it-take-so-long-to-connect-a-data-center-to-the-grid.

4 Norris, T., Profeta, T., Patiño-Echeverri, D., & Cowie-Haskell, A. (Feb. 2025). “Rethinking Load Growth: Assessing the Potential for Integration of Large Flexible Loads in US Power Systems.” Nicholas Institute for Energy, Environment & Sustainability, Duke University. https://nicholasinstitute.duke.edu/articles/study-finds-headroom-grid-data-centers.

5 Norris et al., “Rethinking Load Growth” (2025), Nicholas Institute, Duke University. The study finds the largest 22 U.S. balancing authorities could accommodate 76 GW of new load with 0.25% annual curtailment (~85 hours/year), 98 GW at 0.5%, and 126 GW at 1.0% (~87 hours of full-equivalent curtailment, since 1% of 8,760 hours ≈ 87.6 hours). See https://nicholasinstitute.duke.edu/articles/study-finds-headroom-grid-data-centers and Utility Dive, https://www.utilitydive.com/news/us-grid-headroom-flexible-load-data-center-ai-ev-duke-report/739767/.

6 Norris et al., “Rethinking Load Growth” (2025), Nicholas Institute, Duke University. The report estimates the average load factor of the U.S. power system at approximately 53%, meaning roughly half of existing generation and transmission capacity is unused in any given hour. See also Power Magazine, “Duke Researchers: Grid Flexibility Key to Accommodate Load Growth,” https://www.powermag.com/duke-researchers-grid-flexibility-key-to-accommodate-load-growth/.

7 Inside Climate News, “Data Centers’ Use of Diesel Generators for Backup Power Is Commonplace—and Problematic” (Nov. 2025), https://insideclimatenews.org/news/12112025/data-center-diesel-generators-noise-pollution/; Virginia Mercury, “Lawmakers debate how to regulate data centers’ diesel backup generators” (Feb. 2026), https://virginiamercury.com/2026/02/17/lawmakers-debate-how-to-regulate-data-centers-diesel-backup-generators/. EPA Tier II standards permit operation only during emergencies; Tier IV-equivalent emissions controls are required for prime or extended runtime use.

8 Patel, D., et al., “Power Stabilization for AI Training Datacenters,” arXiv:2508.14318, https://arxiv.org/html/2508.14318v1; Together AI, “Optimizing Training Workloads for GPU Clusters,” https://www.together.ai/blog/optimizing-training-workloads-for-gpu-clusters; HPCwire, “Clockwork.io Introduces Live GPU Migration for AI Cluster Failures” (March 2026), https://www.hpcwire.com/2026/03/11/clockwork-io-introduces-live-gpu-migration-for-ai-cluster-failures/.